Congressional Appropriations Analyzer

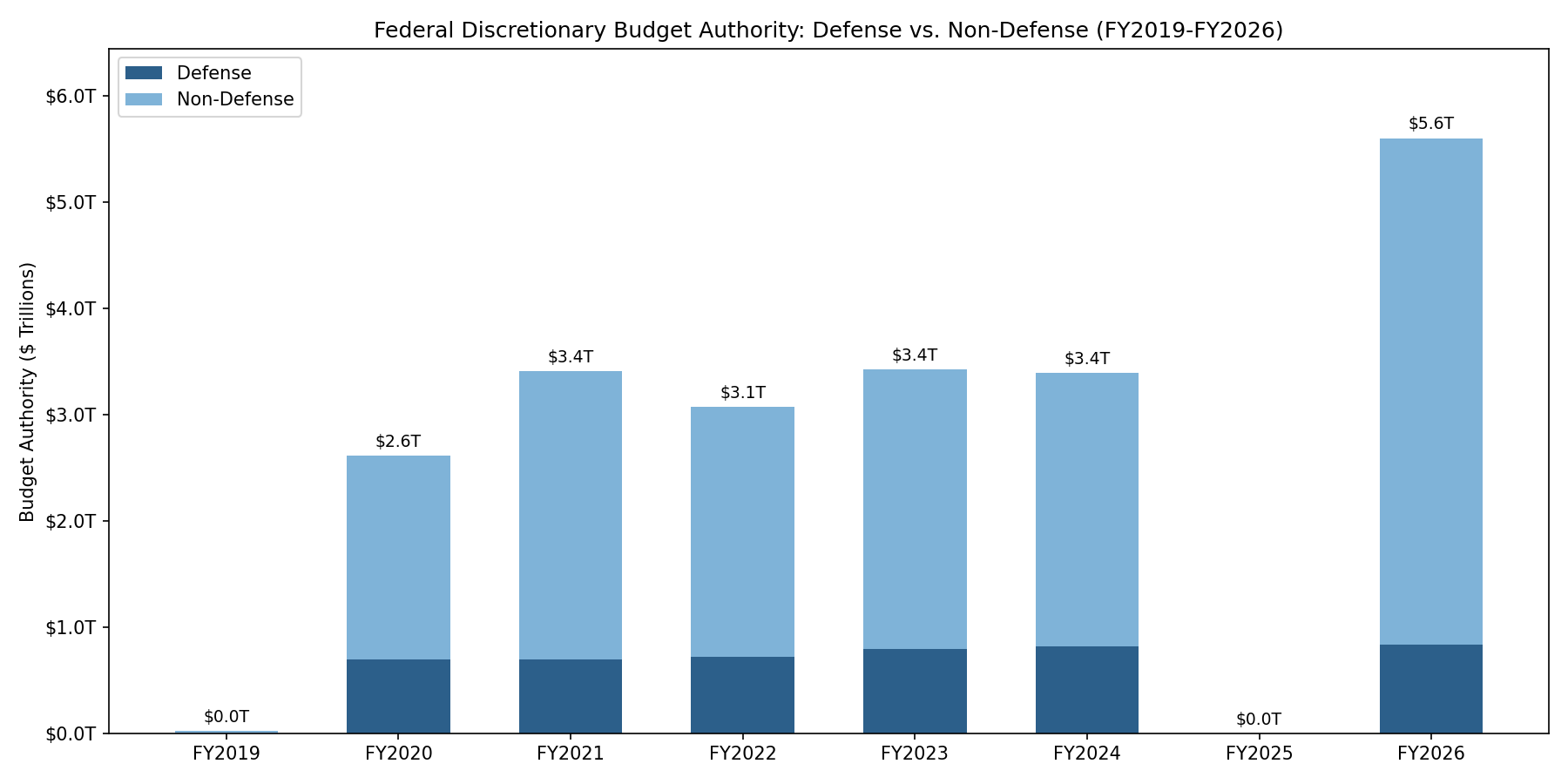

congress-approp is a Rust CLI tool and library that downloads U.S. federal appropriations bills from Congress.gov, extracts every spending provision into structured JSON using Claude, and verifies each dollar amount against the source text. The included dataset covers 32 enacted bills across FY2019–FY2026 with 34,568 provisions and $21.5 trillion in budget authority.

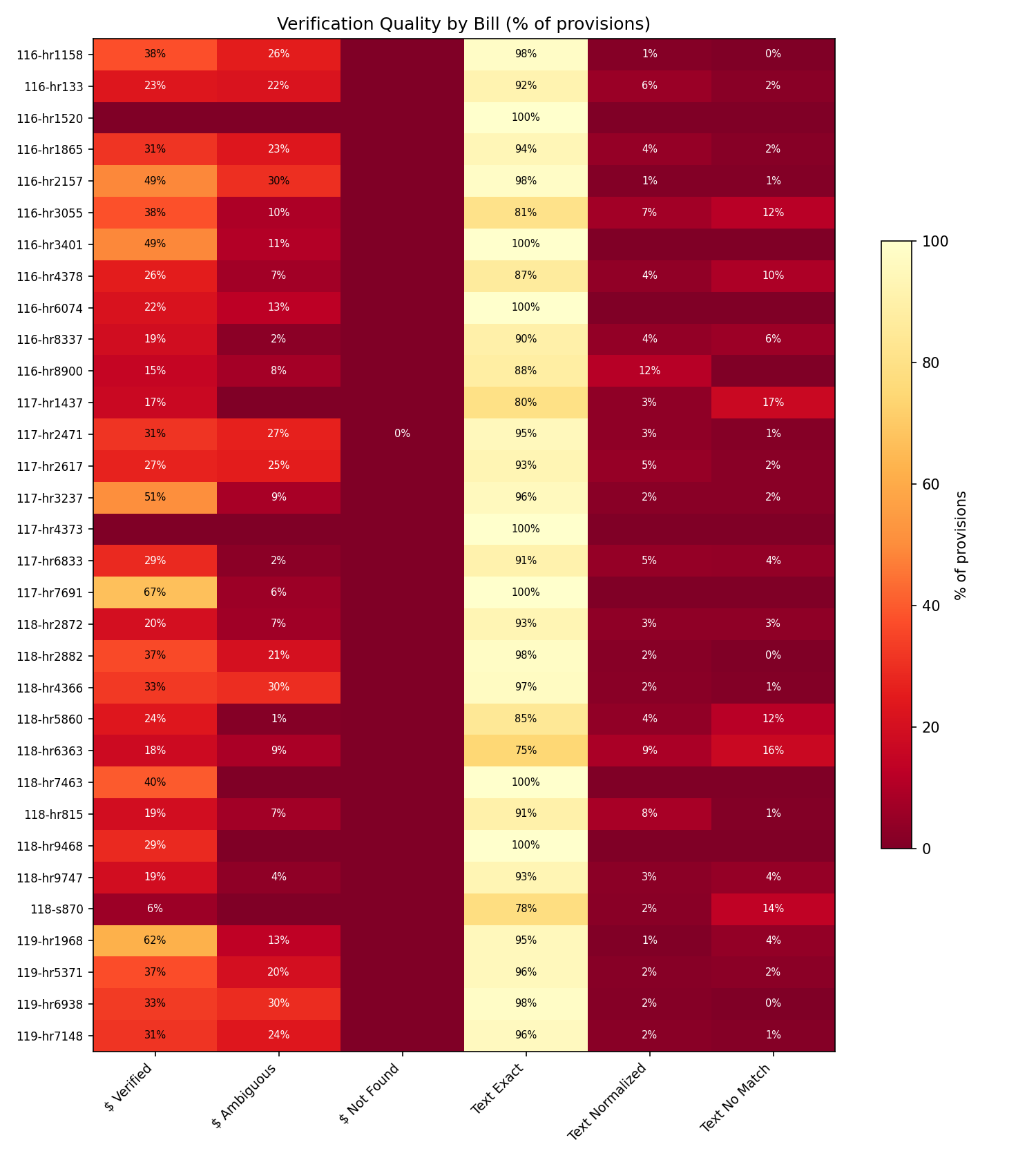

Dollar amounts are verified by deterministic string matching against the enrolled bill text — no LLM in the verification loop. 99.995% of extracted dollar amounts are confirmed present in the source (18,583 of 18,584). Every provision carries a source_span with exact byte offsets into the enrolled bill for independent verification.

Jump straight to working examples: Recipes & Demos — track any federal account across fiscal years, compare subcommittees with inflation adjustment, load the data in Python, and more. No API keys needed.

What’s Included

This book ships with 32 enacted appropriations bills across 4 congresses (116th–119th), covering FY2019 through FY2026. All twelve appropriations subcommittees are represented for FY2020–FY2024 and FY2026. You don’t need any API keys to explore them — just install the tool and start querying.

116th Congress (FY2019–FY2021) — 11 bills

| Bill | Classification | Provisions | Budget Auth |

|---|---|---|---|

| H.R. 1865 | Omnibus (FY2020, 8 subcommittees) | 3,338 | $1,710B |

| H.R. 1158 | Minibus (FY2020, Defense + CJS + FinServ + Homeland) | 1,519 | $887B |

| H.R. 133 | Omnibus (FY2021, all 12 subcommittees) | 6,739 | $3,378B |

| H.R. 2157 | Supplemental (FY2019, disaster relief) | 116 | $19B |

| H.R. 3401 | Supplemental (FY2019, humanitarian) | 55 | $5B |

| H.R. 6074 | Supplemental (FY2020, COVID preparedness) | 55 | $8B |

| + 5 CRs | Continuing resolutions | 351 | $31B |

117th Congress (FY2021–FY2023) — 7 bills

| Bill | Classification | Provisions | Budget Auth |

|---|---|---|---|

| H.R. 2471 | Omnibus (FY2022) | 5,063 | $3,031B |

| H.R. 2617 | Omnibus (FY2023) | 5,910 | $3,379B |

| H.R. 3237 | Supplemental (FY2021, Capitol security) | 47 | $2B |

| H.R. 7691 | Supplemental (FY2022, Ukraine) | 67 | $40B |

| H.R. 6833 | CR + Ukraine supplemental | 240 | $46B |

| + 2 CRs | Continuing resolutions | 37 | $0 |

118th Congress (FY2024/FY2025) — 10 bills

| Bill | Classification | Provisions | Budget Auth |

|---|---|---|---|

| H.R. 4366 | Omnibus (MilCon-VA, Ag, CJS, E&W, Interior, THUD) | 2,323 | $921B |

| H.R. 2882 | Omnibus (Defense, FinServ, Homeland, Labor-HHS, LegBranch, State) | 2,608 | $2,451B |

| H.R. 815 | Supplemental (Ukraine/Israel/Taiwan) | 306 | $95B |

| H.R. 9468 | Supplemental (VA) | 7 | $3B |

| H.R. 5860 | Continuing Resolution + 13 anomalies | 136 | $16B |

| S. 870 | Authorization (Fire Admin) | 51 | $0 |

| + 4 CRs | Continuing resolutions | 233 | $0 |

119th Congress (FY2025/FY2026) — 4 bills

| Bill | Classification | Provisions | Budget Auth |

|---|---|---|---|

| H.R. 7148 | Omnibus (Defense + Labor-HHS + THUD + FinServ + State) | 2,774 | $2,841B |

| H.R. 5371 | Minibus (CR + Ag + LegBranch + MilCon-VA) | 1,051 | $681B |

| H.R. 6938 | Minibus (CJS + Energy-Water + Interior) | 1,028 | $196B |

| H.R. 1968 | Full-Year CR with Appropriations (FY2025) | 514 | $1,786B |

Totals: 32 bills, 34,568 provisions, $21.5 trillion in budget authority, 1,051 accounts tracked by Treasury Account Symbol across FY2019–FY2026.

What Can You Do?

“How did THUD funding change from FY2024 to FY2026?”

congress-approp enrich --dir data # Generate metadata (once, no API key)

congress-approp compare --base-fy 2024 --current-fy 2026 --subcommittee thud --dir data

82 accounts matched across fiscal years — Tenant-Based Rental Assistance up $6.1B (+18.7%), Transit Formula Grants reclassified at $14.6B, Capital Investment Grants down $505M.

“What’s the FY2026 MilCon-VA budget, and how much is advance?”

congress-approp summary --dir data --fy 2026 --subcommittee milcon-va --show-advance

┌───────────┬────────────────┬────────────┬─────────────────┬─────────────────┬─────────────────┬─────────────────┬─────────────────┐

│ Bill ┆ Classification ┆ Provisions ┆ Current ($) ┆ Advance ($) ┆ Total BA ($) ┆ Rescissions ($) ┆ Net BA ($) │

╞═══════════╪════════════════╪════════════╪═════════════════╪═════════════════╪═════════════════╪═════════════════╪═════════════════╡

│ H.R. 5371 ┆ Minibus ┆ 257 ┆ 101,742,083,450 ┆ 393,689,946,000 ┆ 495,432,029,450 ┆ 16,499,000,000 ┆ 478,933,029,450 │

└───────────┴────────────────┴────────────┴─────────────────┴─────────────────┴─────────────────┴─────────────────┴─────────────────┘

79.5% of MilCon-VA is advance appropriations for the next fiscal year — without --show-advance, you’d overstate current-year VA spending by $394 billion.

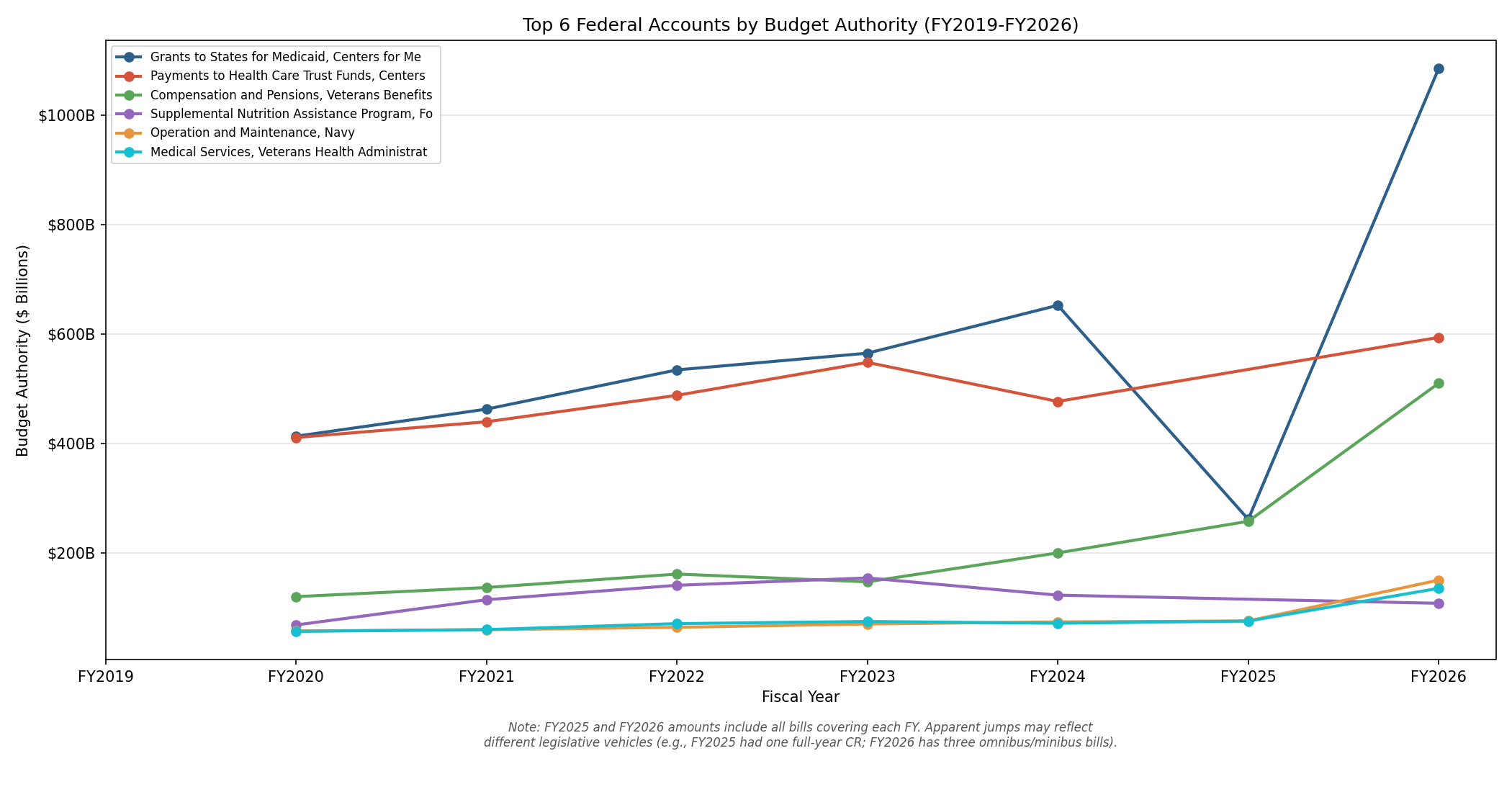

“Trace VA Compensation and Pensions across all fiscal years”

congress-approp relate 118-hr9468:0 --dir data --fy-timeline

Shows every matching provision across FY2024–FY2026 with current/advance/supplemental split, plus deterministic hashes you can save as persistent links for future comparisons.

“Find everything about FEMA disaster relief”

congress-approp search --dir data --semantic "FEMA disaster relief funding" --top 5

Finds FEMA provisions across 5 different bills by meaning, not just keywords — even when the bill text says “Federal Emergency Management Agency—Disaster Relief Fund” instead of “FEMA.”

Key Concepts

enrichgenerates bill metadata offline (no API keys) — enabling fiscal year filtering, subcommittee scoping, and advance appropriation detection.--fy 2026filters any command to bills covering that fiscal year.--subcommittee thudscopes to a specific appropriations jurisdiction, resolving division letters automatically (Division D in one bill, Division F in another — both map to THUD).--show-advanceseparates current-year spending from advance appropriations (money enacted now but available in a future fiscal year). Critical for year-over-year comparisons.relatetraces one provision across all bills with a fiscal year timeline.link suggest/link acceptpersist cross-bill relationships socompare --use-linkscan handle renames automatically.

Navigating This Book

This book is organized so you can jump to whatever fits your needs:

- Recipes & Demos — Worked examples for account tracking, fiscal year comparisons, inflation adjustment, Python/pandas integration, and data export. Interactive visualizations included.

- Getting Started — Install the tool and run your first query in under five minutes. Covers installation and first commands.

- Getting to Know the Tool — Background reading on what this tool does, who it’s for, and a primer on how federal appropriations work if you’re new to the domain.

- Tutorials — Step-by-step walkthroughs for common tasks: finding spending on a topic, comparing bills, tracking programs, exporting data, and more.

- How-To Guides — Task-oriented recipes for specific operations like downloading bills, extracting provisions, and generating embeddings.

- Explanation — Deep dives into how the extraction pipeline, verification, semantic search, provision types, and budget authority calculation work under the hood.

- Reference — Lookup material: CLI commands, JSON field definitions, provision types, environment variables, data directory layout, and the glossary.

- For Contributors — Architecture overview, code map, and guides for adding new provision types, commands, and tests.

Version

This documentation covers congress-approp v6.0.0.

- GitHub: https://github.com/cgorski/congress-appropriations

- crates.io: https://crates.io/crates/congress-appropriations

What This Tool Does

The Problem

Every year, Congress passes appropriations bills authorizing roughly $1.7 trillion in discretionary spending — the money that funds federal agencies, military operations, scientific research, infrastructure, veterans’ benefits, and thousands of other programs. These bills run to approximately 1,500 pages annually, published as XML on Congress.gov.

The text is public, but it’s practically unsearchable at the provision level. If you want to know how much Congress appropriated for a specific program, you have three options:

- Read the bill yourself. The FY2024 omnibus alone is over 1,800 pages of dense legislative text with nested cross-references, “of which” sub-allocations, and provisions scattered across twelve divisions.

- Read CBO cost estimates or committee reports. These are expert summaries, but they aggregate — you get totals by title or account, not individual provisions. They also don’t cover every bill type the same way.

- Search Congress.gov full text. You can find keywords, but you can’t filter by provision type, sort by dollar amount, or compare the same program across bills.

None of these let you ask structured questions like “show me every rescission over $10 million” or “which programs got a different amount in the continuing resolution than in the omnibus” or “find all provisions related to opioid treatment, including ones that don’t use the word ‘opioid.’”

What This Tool Does

congress-approp turns appropriations bill text into structured, queryable, verified data:

- Downloads enrolled bill XML from Congress.gov via its official API — the authoritative, machine-readable source

- Extracts every spending provision into structured JSON using Claude, capturing account names, dollar amounts, agencies, availability periods, provision types, section references, and more

- Verifies every dollar amount against the source text using deterministic string matching — no LLM in the verification loop

- Generates semantic embeddings for meaning-based search, enabling search by meaning rather than exact keywords

- Provides CLI query tools to search, compare, summarize, and audit provisions across any number of extracted bills

The Trust Model

LLM extraction is not infallible. This tool is designed around a simple principle: the LLM extracts once; deterministic code verifies everything.

The verification pipeline runs after extraction and checks every claim the LLM made against the source bill text. No language model is involved in verification — it’s pure string matching with tiered fallback (exact → normalized → spaceless). The result across the included dataset:

| Metric | Result |

|---|---|

| Dollar amounts not found in source | 1 out of 18,584 (99.995%) |

| Source traceability | 100% — every provision has byte-level source spans |

| Raw text byte-identical to source | 94.6% |

| CR substitution pairs verified | 100% |

| Sub-allocations correctly excluded from budget authority | ✓ |

Every extracted dollar amount can be traced back to an exact byte position in the enrolled bill text. The audit command shows this verification breakdown for any set of bills. If a number can’t be verified, it’s flagged — not silently accepted. For the full breakdown, see Accuracy Metrics.

The ~5% of provisions where raw_text isn’t a byte-identical substring are cases where the LLM truncated a very long provision or normalized whitespace. The verify-text command repairs these deterministically — and the dollar amounts in those provisions are still independently verified.

What’s Included

The tool ships with 32 enacted appropriations bills across 4 congresses (116th–119th), covering FY2019 through FY2026. Every major bill type is represented — omnibus, minibus, continuing resolutions, supplementals, and authorizations. See the Recipes & Demos page for the full bill inventory, or run congress-approp summary --dir data to see them all.

Each bill directory includes the source XML, extracted provisions (extraction.json), verification report, extraction metadata, TAS mapping, bill metadata, and pre-computed embeddings. No API keys are required to query this data.

Five Things You Can Do Right Now

All of these work immediately with the included example data — no API keys needed.

1. See budget totals for all included bills:

congress-approp summary --dir data

Shows each bill’s provision count, gross budget authority, rescissions, and net budget authority in a formatted table.

2. Search all appropriations provisions:

congress-approp search --dir data --type appropriation

Lists every appropriation-type provision across all bills with account name, amount, division, and agency.

3. Find FEMA funding:

congress-approp search --dir data --keyword "Federal Emergency Management"

Searches provision text for any mention of FEMA across all bills.

4. See what the continuing resolution changed:

congress-approp search --dir data/118-hr5860 --type cr_substitution

Shows the 13 “anomalies” — programs where the CR set a different funding level instead of continuing at the prior-year rate.

5. Audit verification status:

congress-approp audit --dir data

Displays a detailed verification breakdown for each bill: how many dollar amounts were verified, how many raw text excerpts matched the source, and the completeness coverage metric.

Who This Is For

congress-approp is built for anyone who needs to work with the details of federal appropriations bills — not just the headline numbers, but the individual provisions. This chapter describes five audiences and how each can get the most out of the tool.

Journalists & Policy Researchers

What you’d use this for:

- Fact-checking spending claims. A press release says “Congress cut Program X by 15%.” You can pull up every provision mentioning that program, compare the dollar amounts to the prior year’s bill, and confirm or refute the claim against the enrolled bill text — not a summary or a committee report, but the law itself.

- Comparing spending across fiscal years. “How did THUD funding change from FY2024 to FY2026?” Use

compare --base-fy 2024 --current-fy 2026 --subcommittee thudand get a per-account comparison: Tenant-Based Rental Assistance up $6.1B (+18.7%), Capital Investment Grants down $505M. No need to know which bills or divisions to look at — the tool resolves that automatically. - Finding provisions by topic. You’re writing a story about opioid treatment funding. Semantic search finds relevant provisions even when the bill text says “Substance Use Treatment and Prevention” instead of “opioid.” Combine with

--fy 2026 --subcommittee labor-hhsto scope results to a specific year and jurisdiction. - Separating advance from current-year spending. 79.5% of MilCon-VA budget authority is advance appropriations for the next fiscal year. Without

--show-advance, a reporter comparing year-over-year VA spending would be off by hundreds of billions of dollars. The tool flags this automatically. - Tracing a program across all bills. Use

relate 118-hr9468:0 --fy-timelineto see VA Compensation and Pensions across FY2024–FY2026, with current/advance/supplemental split per year and links to every matching provision.

Start here: Getting Started → Find Spending on a Topic → Compare Two Bills → Enrich Bills with Metadata

API keys needed: None for querying pre-extracted example data (including FY filtering, subcommittee scoping, advance splits, and relate). OPENAI_API_KEY if you want semantic (meaning-based) search. CONGRESS_API_KEY + ANTHROPIC_API_KEY if you want to download and extract additional bills yourself.

Congressional Staffers & Analysts

What you’d use this for:

- Tracking program funding across bills. Use

relateto trace a specific account — say, VA Compensation and Pensions — across all 14 bills with a fiscal year timeline showing the current-year, advance, and supplemental split. Save the matches as persistent links withlink acceptso you can reuse them in future comparisons. - Subcommittee-level analysis. “What’s the FY2026 Defense budget?” Use

summary --fy 2026 --subcommittee defenseand get $836B in budget authority from H.R. 7148 Division A. The tool maps division letters to canonical jurisdictions automatically — Division A means Defense in H.R. 7148 but CJS in H.R. 6938. - Identifying CR anomalies. Continuing resolutions fund the government at prior-year rates except for specific anomalies. The tool extracts every

cr_substitutionas structured data so you can see exactly which programs got different treatment:congress-approp search --dir data/118-hr5860 --type cr_substitution. - Enriched bill classifications. The tool distinguishes omnibus (5+ subcommittees), minibus (2–4), full-year CR with appropriations (like H.R. 1968 with $1.786T in appropriations alongside a CR mechanism), and supplementals — not just the raw LLM classification.

- Exporting for briefings and spreadsheets. Every query command supports

--format csvoutput. Pipe it to a file and open it in Excel:congress-approp compare --base-fy 2024 --current-fy 2026 --subcommittee thud --dir data --format csv > thud_compare.csv.

Start here: Getting Started → Compare Two Bills → Enrich Bills with Metadata → Track a Program Across Bills

API keys needed: None for querying pre-extracted data (including FY filtering, subcommittee scoping, advance splits, relate, and link management). Most staffers won’t need to run extractions themselves — the included example data covers 13 enacted bills across FY2024–FY2026.

Data Scientists & Developers

What you’d use this for:

- Building dashboards and visualizations. The

--format jsonand--format jsonloutput modes give you machine-readable provision data ready for ingestion into dashboards, notebooks, or databases. Every provision includes structured fields for amount, agency, account, division, section, provision type, and more. - Integrating into data pipelines.

congress-appropis both a CLI tool and a Rust library (congress_appropriations). You can call it from scripts via the CLI or embed it directly in Rust projects via the library API. The JSON schema is stable within major versions. - Extending with new provision types or analysis. The extraction schema supports 11 provision types today. If you need to capture something new — say, a specific category of earmark or a new kind of spending limitation — the Adding a New Provision Type guide walks you through it.

Start here: Getting Started → Export Data for Spreadsheets and Scripts → Use the Library API from Rust → Architecture Overview

API keys needed: Depends on your workflow. None for querying existing extractions. OPENAI_API_KEY for generating embeddings (semantic search). CONGRESS_API_KEY + ANTHROPIC_API_KEY for downloading and extracting new bills.

Auditors & Oversight Staff

What you’d use this for:

- Validating extracted numbers. The

auditcommand gives you a per-bill breakdown of verification status: how many dollar amounts were found in the source text, how many raw text excerpts matched byte-for-byte, and a completeness metric showing what percentage of dollar strings in the source were accounted for. Across the included dataset, 99.995% of dollar amounts are verified against the source text. See Accuracy Metrics for the full breakdown. - Assessing extraction completeness. The verification report flags any dollar amount that appears in the source XML but isn’t captured by an extracted provision. A completeness percentage below 100% doesn’t necessarily indicate a missed provision — many dollar strings in bill text are statutory cross-references, loan guarantee ceilings, or old amounts being struck by amendments — but it gives you a starting point for investigation.

- Tracing numbers to source. Every verified dollar amount includes a character position in the source text. Every provision includes

raw_textthat can be matched against the bill XML. You can independently confirm any number the tool reports by opening the source file and checking the indicated position.

Start here: Getting Started → Verify Extraction Accuracy → LLM Reliability and Guardrails

API keys needed: None. All verification and audit operations work entirely offline against already-extracted data.

Contributors

What you’d use this for:

- Adding features. The tool is open source under MIT/Apache-2.0. Whether you want to add a new CLI subcommand, support a new bill format, or improve the extraction prompt, the contributor guides walk you through the codebase and conventions.

- Fixing bugs. The Testing Strategy chapter explains how the test suite is structured — including golden-file tests against the example bills — so you can reproduce issues and verify fixes.

- Understanding the architecture. The Architecture Overview and Code Map chapters explain how the pipeline stages connect, where each module lives, and how data flows from XML download through LLM extraction and verification to query output.

Start here: Architecture Overview → Code Map → Testing Strategy → Style Guide and Conventions

API keys needed: CONGRESS_API_KEY + ANTHROPIC_API_KEY if you’re working on download or extraction features. OPENAI_API_KEY if you’re working on embedding or semantic search features. None if you’re working on query, verification, or CLI features — the example data is sufficient.

How Federal Appropriations Work

This chapter covers the essentials of federal appropriations — fiscal years, bill types, provision structure, and key terminology. Readers already familiar with the appropriations process can skip to the tutorials.

The Federal Budget in 60 Seconds

The U.S. federal government spends roughly $6.7 trillion per year. That breaks down into three major categories:

| Category | Share | What It Covers |

|---|---|---|

| Mandatory spending | ~63% | Social Security, Medicare, Medicaid, SNAP, and other programs where spending is determined by eligibility rules set in permanent law — not annual votes |

| Discretionary spending | ~26% | Everything Congress votes on each year through appropriations bills: defense, veterans’ health care, scientific research, federal law enforcement, national parks, foreign aid, and thousands of other programs |

| Net interest | ~11% | Interest payments on the national debt |

This tool covers the 26% — discretionary spending — plus certain mandatory spending lines that appear as appropriation provisions in the bill text (for example, SNAP funding appears as a line item in the Agriculture appropriations division even though it’s technically mandatory spending). That’s why the budget authority total for H.R. 4366 is ~$846 billion, not the ~$1.7 trillion figure you’ll sometimes see for total discretionary spending (which includes all twelve bills plus defense), and certainly not the ~$6.7 trillion total federal budget.

The Fiscal Year

The federal fiscal year runs from October 1 through September 30. It’s named for the calendar year in which it ends, not the one in which it begins. So:

- FY2024 = October 1, 2023 – September 30, 2024

- FY2025 = October 1, 2024 – September 30, 2025

Bills are labeled by the fiscal year they fund, not the calendar year they were enacted in. The Consolidated Appropriations Act, 2024 (H.R. 4366) was signed into law on March 23, 2024 — nearly six months into the fiscal year it was supposed to fund from the start.

The Twelve Appropriations Bills

Each year, Congress is supposed to pass twelve individual appropriations bills, one for each subcommittee of the House and Senate Appropriations Committees:

- Agriculture, Rural Development, FDA

- Commerce, Justice, Science (CJS)

- Defense

- Energy and Water Development

- Financial Services and General Government

- Homeland Security

- Interior, Environment

- Labor, Health and Human Services, Education (Labor-HHS)

- Legislative Branch

- Military Construction, Veterans Affairs (MilCon-VA)

- State, Foreign Operations

- Transportation, Housing and Urban Development (THUD)

In practice, Congress rarely passes all twelve on time. Instead, it bundles them:

- An omnibus packages all (or nearly all) twelve bills into a single piece of legislation.

- A minibus bundles a few of the twelve together.

- Individual bills are occasionally passed on their own, but this has become increasingly rare.

When none of the twelve are done by October 1, Congress passes a continuing resolution to keep the government funded temporarily while it finishes negotiations.

Bill Types

The included dataset covers 32 enacted appropriations bills spanning all major bill types. Here’s what each one is, with the real example from this tool:

Regular / Omnibus

A regular appropriations bill provides new funding for one of the twelve subcommittee jurisdictions for the coming fiscal year. An omnibus combines multiple regular bills into one legislative vehicle, organized into lettered divisions (Division A, Division B, etc.). H.R. 4366, the Consolidated Appropriations Act, 2024, is an omnibus covering MilCon-VA, Agriculture, CJS, Energy-Water, Interior, THUD, and other matters across multiple divisions. It contains 2,364 provisions and authorizes $846 billion in budget authority.

Continuing Resolution

A continuing resolution (CR) provides temporary funding — usually at the prior fiscal year’s rate — for agencies whose regular appropriations bills haven’t been enacted yet. Most provisions in a CR simply say “continue at last year’s level,” but specific programs may get different treatment through anomalies (formally called CR substitutions). H.R. 5860, the Continuing Appropriations Act, 2024, contains 130 provisions including 13 CR substitutions — programs where Congress set a specific dollar amount rather than defaulting to the prior-year rate. It also includes mandatory spending extensions and other legislative riders.

Supplemental

A supplemental appropriation provides additional funding outside the regular annual cycle, typically in response to emergencies — natural disasters, military operations, public health crises, or (in this case) an unexpected funding shortfall. H.R. 9468, the Veterans Benefits Continuity and Accountability Supplemental Appropriations Act, 2024, contains 7 provisions providing $2.9 billion for VA Compensation and Pensions and Readjustment Benefits, plus reporting requirements and an Inspector General review.

Rescissions

A rescission bill cancels previously enacted budget authority. Rescissions also appear as individual provisions within larger bills — H.R. 4366 includes $24.7 billion in rescissions alongside its new appropriations.

Anatomy of a Provision

To see how bill text becomes structured data, let’s walk through a real example from H.R. 9468. Here’s what Congress wrote:

For an additional amount for ’‘Compensation and Pensions’’, $2,285,513,000, to remain available until expended.

And here is the structured JSON that congress-approp extracted from that sentence:

{

"provision_type": "appropriation",

"agency": "Department of Veterans Affairs",

"account_name": "Compensation and Pensions",

"amount": {

"value": { "kind": "specific", "dollars": 2285513000 },

"semantics": "new_budget_authority",

"text_as_written": "$2,285,513,000"

},

"detail_level": "top_level",

"availability": "to remain available until expended",

"fiscal_year": 2024,

"raw_text": "For an additional amount for ''Compensation and Pensions'', $2,285,513,000, to remain available until expended.",

"confidence": 0.99

}

Here’s what each piece means:

account_name: Pulled from the double-quoted name in the bill text (the''Compensation and Pensions''delimiters are a legislative drafting convention).amount: The dollar value is parsed to an integer (2285513000), the original text is preserved ("$2,285,513,000"), and the meaning is classified — this isnew_budget_authority, meaning Congress is granting new spending authority, not referencing an existing amount.detail_level: This is atop_levelappropriation — the full amount for the account, not a sub-allocation (“of which $X for Y”).availability: Captured from the bill text. “To remain available until expended” means this is no-year money — the agency can spend it over multiple fiscal years, unlike annual funds that expire at the end of the fiscal year.raw_text: The original bill text, verified against the source XML.- Verification: The string

$2,285,513,000was found at character position 431 in the source XML. Theraw_textis a byte-identical substring of the source starting at position 371.

Key Concepts

Budget Authority vs. Outlays

Budget authority (BA) is what Congress authorizes — the legal permission for agencies to enter into obligations (sign contracts, award grants, hire staff). Outlays are what the Treasury actually disburses. The two differ because agencies often obligate funds in one year but spend them over several years (especially for construction, procurement, and multi-year grants).

This tool reports budget authority, because that’s what the bill text specifies. When you see “$846B” for H.R. 4366, that’s the sum of new_budget_authority provisions at the top_level and line_item detail levels — what Congress authorized, not what agencies will spend this year.

Sub-Allocations Are Not Additional Money

Many provisions include “of which” clauses: “For the Office of Science, $8,220,000,000, of which $300,000,000 shall be for fusion energy research.” The $300 million is a sub-allocation — a directive about how to spend part of the $8.2 billion, not money on top of it. The tool captures sub-allocations at detail_level: "sub_allocation" and correctly excludes them from budget authority totals to avoid double-counting.

Advance Appropriations

Sometimes Congress enacts budget authority in this year’s bill but makes it available starting in the next fiscal year. These advance appropriations are included in the bill’s budget authority total (because the bill does enact them) but are noted in the provision’s notes field.

Congress Numbers

Each Congress spans two calendar years. The 118th Congress served from January 2023 through January 2025; the 119th Congress runs from January 2025 through January 2027. Bills are identified by their Congress — H.R. 4366 of the 118th Congress is an entirely different bill from H.R. 4366 of any other Congress. All three example bills in this tool are from the 118th Congress.

Essential Glossary

These five terms come up throughout the book. A comprehensive glossary is available in the Glossary reference chapter.

| Term | Definition |

|---|---|

| Budget authority | The legal authority Congress grants to federal agencies to enter into financial obligations. This is the dollar figure in an appropriation provision — what Congress authorizes, as distinct from what agencies ultimately spend (outlays). |

| Provision | A single identifiable directive in an appropriations bill: an appropriation, a rescission, a spending limitation, a transfer authority, a CR anomaly, a policy rider, or any other discrete instruction. This is the fundamental unit of data in congress-approp. |

| Enrolled | The final text of a bill as passed by both the House and Senate and presented to the President for signature. This is the version congress-approp downloads — the authoritative text that becomes law. |

| Rescission | A provision that cancels previously enacted budget authority. A rescission of $500 million reduces the net budget authority by that amount. In the summary table, rescissions appear in their own column and are subtracted to produce the Net BA figure. |

| Continuing resolution (CR) | Temporary legislation that funds the government at the prior year’s rate for agencies whose regular appropriations bills have not been enacted. Specific exceptions, called anomalies (or CR substitutions), set different funding levels for particular programs. |

Installation

You will need: A computer running macOS or Linux, and an internet connection.

You will learn: How to install

congress-appropand verify it’s working.

Install Rust

congress-approp is written in Rust and requires Rust 1.93 or later. If you don’t have Rust installed, the easiest way is via rustup:

curl --proto '=https' --tlsv1.2 -sSf https://sh.rustup.rs | sh

If you already have Rust, make sure it’s up to date:

rustup update

Verify your version:

rustc --version

# Should show 1.93.0 or later

Install from Source (Recommended)

Cloning the repository gives you the full dataset — 32 enacted appropriations bills (FY2019–FY2026) with pre-computed embeddings, ready to query with no API keys.

git clone https://github.com/cgorski/congress-appropriations.git

cd congress-appropriations

cargo install --path .

This compiles the project and places the congress-approp binary on your PATH. The first build takes a few minutes; subsequent builds are much faster.

Install from crates.io

If you just want the binary without cloning the full repository:

cargo install congress-appropriations

Note: The crates.io package does not include the

data/directory or pre-computed embedding vectors because they exceed the crates.io upload limit. If you install via crates.io, clone the repository separately to get the dataset, or download and extract your own bills.

Verify the Installation

Run the summary command against the included data:

congress-approp summary --dir data

You should see a table listing all 32 bills with their provision counts, budget authority, and rescissions. The last line confirms data integrity:

0 dollar amounts unverified across all bills. Run `congress-approp audit` for detailed verification.

If you see 32 bills and 34,568 total provisions across FY2019–FY2026, everything is working. You’re ready to start querying.

Tip: If you’re running from the cloned repo directory,

datais a relative path that points to the included dataset. If you installed viacargo installand are running from a different directory, provide the full path to thedata/directory inside your clone.

API Keys (Optional)

No API keys are needed to query the pre-extracted dataset. Keys are only required if you want to download new bills, extract provisions from them, or use semantic search:

| Environment Variable | Required For | How to Get It |

|---|---|---|

CONGRESS_API_KEY | Downloading bill XML (download command) | Free — sign up at api.congress.gov |

ANTHROPIC_API_KEY | Extracting provisions (extract command) | Sign up at console.anthropic.com |

OPENAI_API_KEY | Generating embeddings (embed command) and semantic search (search --semantic) | Sign up at platform.openai.com |

Set them in your shell when needed:

export CONGRESS_API_KEY="your-key-here"

export ANTHROPIC_API_KEY="your-key-here"

export OPENAI_API_KEY="your-key-here"

See Environment Variables and API Keys for details.

Rebuilding After Source Changes

If you modify the source code (or pull updates), rebuild and reinstall with:

cargo install --path .

For development iteration without reinstalling:

cargo build --release

./target/release/congress-approp summary --dir data

Next Steps

Next: Your First Query.

Your First Query

You will need:

congress-appropinstalled (Installation), access to thedata/directory from the cloned repository.You will learn: How to explore the included FY2024 appropriations data using five core commands — no API keys required.

This chapter walks through five core commands using the included dataset. Every command shown here produces output you can verify against the data files.

Step 1: See What Bills You Have

Start with the summary command to get an overview:

congress-approp summary --dir data

┌───────────┬───────────────────────┬────────────┬─────────────────┬─────────────────┬─────────────────┐

│ Bill ┆ Classification ┆ Provisions ┆ Budget Auth ($) ┆ Rescissions ($) ┆ Net BA ($) │

╞═══════════╪═══════════════════════╪════════════╪═════════════════╪═════════════════╪═════════════════╡

│ H.R. 4366 ┆ Omnibus ┆ 2364 ┆ 846,137,099,554 ┆ 24,659,349,709 ┆ 821,477,749,845 │

│ H.R. 5860 ┆ Continuing Resolution ┆ 130 ┆ 16,000,000,000 ┆ 0 ┆ 16,000,000,000 │

│ H.R. 9468 ┆ Supplemental ┆ 7 ┆ 2,882,482,000 ┆ 0 ┆ 2,882,482,000 │

│ TOTAL ┆ ┆ 2501 ┆ 865,019,581,554 ┆ 24,659,349,709 ┆ 840,360,231,845 │

└───────────┴───────────────────────┴────────────┴─────────────────┴─────────────────┴─────────────────┘

0 dollar amounts unverified across all bills. Run `congress-approp audit` for detailed verification.

Here’s what each column means:

| Column | Meaning |

|---|---|

| Bill | The bill identifier (e.g., H.R. 4366) |

| Classification | What kind of appropriations bill: Omnibus, Continuing Resolution, or Supplemental |

| Provisions | Total number of provisions extracted from the bill |

| Budget Auth ($) | Sum of all provisions with new_budget_authority semantics — what Congress authorized agencies to spend. Computed from the actual provisions, not from any LLM-generated summary |

| Rescissions ($) | Sum of all rescission provisions — money Congress is taking back from prior appropriations |

| Net BA ($) | Budget Authority minus Rescissions — the net new spending authority |

The footer line — “0 dollar amounts unverified” — tells you that every extracted dollar amount was confirmed to exist in the source bill text. This is the headline trust metric.

Step 2: Search for Provisions

The search command finds provisions matching your criteria. Let’s start broad — all appropriation-type provisions across all bills:

congress-approp search --dir data --type appropriation

This returns a table with hundreds of rows. Let’s narrow it down. Find all provisions mentioning FEMA:

congress-approp search --dir data --keyword "Federal Emergency Management"

┌───┬───────────┬───────────────┬───────────────────────────────────────────────┬────────────────┬──────────┬─────┐

│ $ ┆ Bill ┆ Type ┆ Description / Account ┆ Amount ($) ┆ Section ┆ Div │

╞═══╪═══════════╪═══════════════╪═══════════════════════════════════════════════╪════════════════╪══════════╪═════╡

│ ┆ H.R. 5860 ┆ other ┆ Allows FEMA Disaster Relief Fund to be appor… ┆ — ┆ SEC. 128 ┆ A │

│ ✓ ┆ H.R. 5860 ┆ appropriation ┆ Federal Emergency Management Agency—Disast… ┆ 16,000,000,000 ┆ SEC. 129 ┆ A │

│ ✓ ┆ H.R. 5860 ┆ appropriation ┆ Office of the Inspector General—Operations… ┆ 2,000,000 ┆ SEC. 129 ┆ A │

└───┴───────────┴───────────────┴───────────────────────────────────────────────┴────────────────┴──────────┴─────┘

3 provisions found

$ = Amount status: ✓ found (unique), ≈ found (multiple matches), ✗ not found

Understanding the $ column — the verification status for each provision’s dollar amount:

| Symbol | Meaning |

|---|---|

| ✓ | Dollar amount string found at exactly one position in the source text — highest confidence |

| ≈ | Dollar amount found at multiple positions (common for round numbers like $5,000,000) — amount is correct but can’t be pinned to a unique location |

| ✗ | Dollar amount not found in the source text — needs manual review |

| (blank) | Provision doesn’t carry a dollar amount (riders, directives) |

Now try searching by account name. This matches against the structured account_name field rather than searching the full text:

congress-approp search --dir data --account "Child Nutrition"

┌───┬───────────┬───────────────┬─────────────────────────────────────────────┬────────────────┬─────────┬─────┐

│ $ ┆ Bill ┆ Type ┆ Description / Account ┆ Amount ($) ┆ Section ┆ Div │

╞═══╪═══════════╪═══════════════╪═════════════════════════════════════════════╪════════════════╪═════════╪═════╡

│ ✓ ┆ H.R. 4366 ┆ appropriation ┆ Child Nutrition Programs ┆ 33,266,226,000 ┆ ┆ B │

│ ✓ ┆ H.R. 4366 ┆ appropriation ┆ Child Nutrition Programs ┆ 18,004,000 ┆ ┆ B │

│ ... │

└───┴───────────┴───────────────┴─────────────────────────────────────────────┴────────────────┴─────────┴─────┘

The top result — $33.27 billion for Child Nutrition Programs — is the top-level appropriation. The smaller amounts below it are sub-allocations and reference amounts within the same account.

You can combine filters. For example, find all appropriations over $1 billion in Division A (MilCon-VA):

congress-approp search --dir data/118-hr4366 --type appropriation --division A --min-dollars 1000000000

Step 3: Look at the VA Supplemental

The smallest bill, H.R. 9468, is a good place to see the full picture. It has only 7 provisions:

congress-approp search --dir data/118-hr9468

┌───┬───────────┬───────────────┬───────────────────────────────────────────────┬───────────────┬──────────┬─────┐

│ $ ┆ Bill ┆ Type ┆ Description / Account ┆ Amount ($) ┆ Section ┆ Div │

╞═══╪═══════════╪═══════════════╪═══════════════════════════════════════════════╪═══════════════╪══════════╪═════╡

│ ✓ ┆ H.R. 9468 ┆ appropriation ┆ Compensation and Pensions ┆ 2,285,513,000 ┆ ┆ │

│ ✓ ┆ H.R. 9468 ┆ appropriation ┆ Readjustment Benefits ┆ 596,969,000 ┆ ┆ │

│ ┆ H.R. 9468 ┆ rider ┆ Establishes that each amount appropriated o… ┆ — ┆ SEC. 101 ┆ │

│ ┆ H.R. 9468 ┆ rider ┆ Unless otherwise provided, the additional a… ┆ — ┆ SEC. 102 ┆ │

│ ┆ H.R. 9468 ┆ directive ┆ Requires the Secretary of Veterans Affairs … ┆ — ┆ SEC. 103 ┆ │

│ ┆ H.R. 9468 ┆ directive ┆ Requires the Secretary of Veterans Affairs … ┆ — ┆ SEC. 103 ┆ │

│ ┆ H.R. 9468 ┆ directive ┆ Requires the Inspector General of the Depar… ┆ — ┆ SEC. 104 ┆ │

└───┴───────────┴───────────────┴───────────────────────────────────────────────┴───────────────┴──────────┴─────┘

7 provisions found

This is the complete bill: two appropriations ($2.3B for Comp & Pensions, $597M for Readjustment Benefits), two policy riders (SEC. 101 and 102 establishing that these amounts are additional to regular appropriations), and three directives requiring the VA Secretary and Inspector General to submit reports about the funding shortfall that necessitated this supplemental.

Notice how the two appropriations have ✓ in the dollar column, while the riders and directives show no symbol — they don’t carry dollar amounts, so there’s nothing to verify.

Step 4: See What the CR Changed

Continuing resolutions normally fund agencies at prior-year rates, but specific programs can get different treatment through “anomalies” — formally called CR substitutions. These are provisions that say “substitute $X for $Y,” setting a new level instead of continuing the old one.

congress-approp search --dir data/118-hr5860 --type cr_substitution

┌───┬───────────┬──────────────────────────────────────────┬───────────────┬───────────────┬──────────────┬──────────┬─────┐

│ $ ┆ Bill ┆ Account ┆ New ($) ┆ Old ($) ┆ Delta ($) ┆ Section ┆ Div │

╞═══╪═══════════╪══════════════════════════════════════════╪═══════════════╪═══════════════╪══════════════╪══════════╪═════╡

│ ✓ ┆ H.R. 5860 ┆ Rural Housing Service—Rural Community… ┆ 25,300,000 ┆ 75,300,000 ┆ -50,000,000 ┆ SEC. 101 ┆ A │

│ ✓ ┆ H.R. 5860 ┆ Rural Utilities Service—Rural Water a… ┆ 60,000,000 ┆ 325,000,000 ┆ -265,000,000 ┆ SEC. 101 ┆ A │

│ ✓ ┆ H.R. 5860 ┆ ┆ 122,572,000 ┆ 705,768,000 ┆ -583,196,000 ┆ SEC. 101 ┆ A │

│ ✓ ┆ H.R. 5860 ┆ National Science Foundation—STEM Educ… ┆ 92,000,000 ┆ 217,000,000 ┆ -125,000,000 ┆ SEC. 101 ┆ A │

│ ✓ ┆ H.R. 5860 ┆ National Oceanic and Atmospheric Admini… ┆ 42,000,000 ┆ 62,000,000 ┆ -20,000,000 ┆ SEC. 101 ┆ A │

│ ✓ ┆ H.R. 5860 ┆ National Science Foundation—Research … ┆ 608,162,000 ┆ 818,162,000 ┆ -210,000,000 ┆ SEC. 101 ┆ A │

│ ✓ ┆ H.R. 5860 ┆ Department of State—Administration of… ┆ 87,054,000 ┆ 147,054,000 ┆ -60,000,000 ┆ SEC. 101 ┆ A │

│ ✓ ┆ H.R. 5860 ┆ Bilateral Economic Assistance—Funds A… ┆ 637,902,000 ┆ 937,902,000 ┆ -300,000,000 ┆ SEC. 101 ┆ A │

│ ✓ ┆ H.R. 5860 ┆ Bilateral Economic Assistance—Departm… ┆ 915,048,000 ┆ 1,535,048,000 ┆ -620,000,000 ┆ SEC. 101 ┆ A │

│ ✓ ┆ H.R. 5860 ┆ International Security Assistance—Dep… ┆ 74,996,000 ┆ 374,996,000 ┆ -300,000,000 ┆ SEC. 101 ┆ A │

│ ✓ ┆ H.R. 5860 ┆ Office of Personnel Management—Salari… ┆ 219,076,000 ┆ 190,784,000 ┆ +28,292,000 ┆ SEC. 126 ┆ A │

│ ✓ ┆ H.R. 5860 ┆ Department of Transportation—Federal … ┆ 617,000,000 ┆ 570,000,000 ┆ +47,000,000 ┆ SEC. 137 ┆ A │

│ ✓ ┆ H.R. 5860 ┆ Department of Transportation—Federal … ┆ 2,174,200,000 ┆ 2,221,200,000 ┆ -47,000,000 ┆ SEC. 137 ┆ A │

└───┴───────────┴──────────────────────────────────────────┴───────────────┴───────────────┴──────────────┴──────────┴─────┘

13 provisions found

Notice how the table automatically changes shape for CR substitutions — it shows New, Old, and Delta columns instead of a single Amount. This tells you exactly which programs Congress funded above or below the prior-year rate:

- Most programs were cut: Migration and Refugee Assistance lost $620 million (-40.4%), NSF Research lost $210 million (-25.7%)

- Two programs increased: OPM Salaries and Expenses gained $28 million (+14.8%) and FAA Facilities and Equipment gained $47 million (+8.2%)

- Every dollar amount has ✓ — both the new and old amounts were verified in the source text

Step 5: Check Data Quality

The audit command shows how well the extraction held up against the source text:

congress-approp audit --dir data

┌───────────┬────────────┬──────────┬──────────┬───────┬───────┬──────────┬───────────┬──────────┬──────────┐

│ Bill ┆ Provisions ┆ Verified ┆ NotFound ┆ Ambig ┆ Exact ┆ NormText ┆ Spaceless ┆ TextMiss ┆ Coverage │

╞═══════════╪════════════╪══════════╪══════════╪═══════╪═══════╪══════════╪═══════════╪══════════╪══════════╡

│ H.R. 4366 ┆ 2364 ┆ 762 ┆ 0 ┆ 723 ┆ 2285 ┆ 59 ┆ 0 ┆ 20 ┆ 94.2% │

│ H.R. 5860 ┆ 130 ┆ 33 ┆ 0 ┆ 2 ┆ 102 ┆ 12 ┆ 0 ┆ 16 ┆ 61.1% │

│ H.R. 9468 ┆ 7 ┆ 2 ┆ 0 ┆ 0 ┆ 5 ┆ 0 ┆ 0 ┆ 2 ┆ 100.0% │

│ TOTAL ┆ 2501 ┆ 797 ┆ 0 ┆ 725 ┆ 2392 ┆ 71 ┆ 0 ┆ 38 ┆ │

└───────────┴────────────┴──────────┴──────────┴───────┴───────┴──────────┴───────────┴──────────┴──────────┘

The key number: NotFound = 0 for every bill. Every dollar amount the tool extracted actually exists in the source bill text. Here’s a quick guide to the other columns:

| Column | What It Means | Good Value |

|---|---|---|

| Verified | Dollar amount found at exactly one position in source | Higher is better |

| NotFound | Dollar amounts NOT found in source | Should be 0 |

| Ambig | Dollar amount found at multiple positions (e.g., “$5,000,000” appears 50 times) | Not a problem — amount is correct |

| Exact | raw_text excerpt is byte-identical to source | Higher is better |

| NormText | raw_text matches after whitespace/quote normalization | Minor formatting difference |

| TextMiss | raw_text not found at any matching tier | Review manually |

| Coverage | Percentage of dollar strings in source text matched to a provision | 100% is ideal, <100% is often fine |

For a deeper dive into what these numbers mean, see Verify Extraction Accuracy and What Coverage Means.

Step 6: Export to JSON

Every command supports --format json for machine-readable output. This is useful for piping to jq, loading into Python, or just seeing the full data:

congress-approp search --dir data/118-hr9468 --type appropriation --format json

[

{

"account_name": "Compensation and Pensions",

"agency": "Department of Veterans Affairs",

"amount_status": "found",

"bill": "H.R. 9468",

"description": "Compensation and Pensions",

"division": "",

"dollars": 2285513000,

"match_tier": "exact",

"old_dollars": null,

"provision_index": 0,

"provision_type": "appropriation",

"quality": "strong",

"raw_text": "For an additional amount for ''Compensation and Pensions'', $2,285,513,000, to remain available until expended.",

"section": "",

"semantics": "new_budget_authority"

},

{

"account_name": "Readjustment Benefits",

"agency": "Department of Veterans Affairs",

"amount_status": "found",

"bill": "H.R. 9468",

"description": "Readjustment Benefits",

"division": "",

"dollars": 596969000,

"match_tier": "exact",

"old_dollars": null,

"provision_index": 1,

"provision_type": "appropriation",

"quality": "strong",

"raw_text": "For an additional amount for ''Readjustment Benefits'', $596,969,000, to remain available until expended.",

"section": "",

"semantics": "new_budget_authority"

}

]

The JSON output includes every field for each provision — more detail than the table can show. Key fields to know:

dollars: The dollar amount as an integer (no formatting)semantics: What the amount means —new_budget_authoritycounts toward budget totalsraw_text: The verbatim excerpt from the bill textmatch_tier: How closelyraw_textmatched the source —exactmeans byte-identicalquality: Overall quality assessment —strong,moderate, orweakprovision_index: Position in the bill’s provision list (useful for--similarsearches)

Other output formats are also available: --format csv for spreadsheets, --format jsonl for streaming one-object-per-line output. See Output Formats for details.

Enrich for Fiscal Year and Subcommittee Filtering

The example data includes pre-enriched metadata, but if you extract your own bills, run enrich to enable fiscal year and subcommittee filtering:

congress-approp enrich --dir data # No API key needed — runs offline

Once enriched, you can scope any command to a specific fiscal year and subcommittee:

# FY2026 THUD subcommittee only

congress-approp summary --dir data --fy 2026 --subcommittee thud

# See advance vs current-year spending

congress-approp summary --dir data --fy 2026 --subcommittee milcon-va --show-advance

# Compare THUD across fiscal years

congress-approp compare --base-fy 2024 --current-fy 2026 --subcommittee thud --dir data

# Trace one provision across all bills

congress-approp relate 118-hr9468:0 --dir data --fy-timeline

See Enrich Bills with Metadata for the full guide.

What’s Next

Related chapters:

- Want to filter by fiscal year or subcommittee? → Enrich Bills with Metadata

- Want to find specific spending? → Find How Much Congress Spent on a Topic

- Want to compare bills across fiscal years? → Compare Two Bills

- Want to track a program across all bills? → Track a Program Across Bills

- Want to export data to Excel or Python? → Export Data for Spreadsheets and Scripts

- Want to understand the output better? → Understanding the Output (next chapter)

- Want to extract your own bills? → Extract Your Own Bill

- Want to search by meaning instead of keywords? → Use Semantic Search

Understanding the Output

You will need:

congress-appropinstalled, access to thedata/directory.You will learn: How to read every table the tool produces — what each column means, what the symbols indicate, and how to interpret the numbers.

Before diving into tutorials and specific tasks, let’s build a solid understanding of the output formats you’ll encounter. Every command in congress-approp uses consistent conventions, but the tables adapt their shape depending on what you’re looking at.

The Summary Table

The summary command gives you the bird’s-eye view:

congress-approp summary --dir data

┌───────────┬───────────────────────┬────────────┬─────────────────┬─────────────────┬─────────────────┐

│ Bill ┆ Classification ┆ Provisions ┆ Budget Auth ($) ┆ Rescissions ($) ┆ Net BA ($) │

╞═══════════╪═══════════════════════╪════════════╪═════════════════╪═════════════════╪═════════════════╡

│ H.R. 4366 ┆ Omnibus ┆ 2364 ┆ 846,137,099,554 ┆ 24,659,349,709 ┆ 821,477,749,845 │

│ H.R. 5860 ┆ Continuing Resolution ┆ 130 ┆ 16,000,000,000 ┆ 0 ┆ 16,000,000,000 │

│ H.R. 9468 ┆ Supplemental ┆ 7 ┆ 2,882,482,000 ┆ 0 ┆ 2,882,482,000 │

│ TOTAL ┆ ┆ 2501 ┆ 865,019,581,554 ┆ 24,659,349,709 ┆ 840,360,231,845 │

└───────────┴───────────────────────┴────────────┴─────────────────┴─────────────────┴─────────────────┘

0 dollar amounts unverified across all bills. Run `congress-approp audit` for detailed verification.

Column-by-column

| Column | What It Shows |

|---|---|

| Bill | The bill identifier as printed in the legislation (e.g., “H.R. 4366”). The TOTAL row sums across all loaded bills. |

| Classification | The type of appropriations bill: Omnibus, Continuing Resolution, Supplemental, Regular, Minibus, or Rescissions. |

| Provisions | The total count of extracted provisions of all types — appropriations, rescissions, riders, directives, and everything else. |

| Budget Auth ($) | The sum of all provisions where the amount semantics is new_budget_authority and the detail level is top_level or line_item. Sub-allocations and proviso amounts are excluded to prevent double-counting. This number is computed from individual provisions, never from an LLM-generated summary. |

| Rescissions ($) | The absolute value sum of all provisions of type rescission with rescission semantics. This is money Congress is canceling from prior appropriations. |

| Net BA ($) | Budget Authority minus Rescissions. This is the net new spending authority enacted by the bill. For most reporting purposes, Net BA is the number you want. |

The footer

The line below the table — “0 dollar amounts unverified across all bills” — is a quick trust check. It counts provisions across all loaded bills where the dollar amount string was not found in the source bill text. Zero means every extracted number was confirmed against the source. If this number is ever greater than zero, the audit command will show you exactly which provisions need review.

By-agency view

Add --by-agency to see budget authority broken down by parent department:

congress-approp summary --dir data --by-agency

This appends a second table showing every agency, its total budget authority, rescissions, and provision count, sorted by budget authority descending. For example, Department of Veterans Affairs shows ~$343B (which includes mandatory programs like Compensation and Pensions that appear as appropriation lines in the bill text).

The Search Table

The search command produces tables that adapt their columns based on what you’re searching for. This is one of the most important things to understand about the output.

Standard search table

For most searches, you see this layout:

congress-approp search --dir data/118-hr9468

┌───┬───────────┬───────────────┬───────────────────────────────────────────────┬───────────────┬──────────┬─────┐

│ $ ┆ Bill ┆ Type ┆ Description / Account ┆ Amount ($) ┆ Section ┆ Div │

╞═══╪═══════════╪═══════════════╪═══════════════════════════════════════════════╪═══════════════╪══════════╪═════╡

│ ✓ ┆ H.R. 9468 ┆ appropriation ┆ Compensation and Pensions ┆ 2,285,513,000 ┆ ┆ │

│ ✓ ┆ H.R. 9468 ┆ appropriation ┆ Readjustment Benefits ┆ 596,969,000 ┆ ┆ │

│ ┆ H.R. 9468 ┆ rider ┆ Establishes that each amount appropriated o… ┆ — ┆ SEC. 101 ┆ │

│ ┆ H.R. 9468 ┆ rider ┆ Unless otherwise provided, the additional a… ┆ — ┆ SEC. 102 ┆ │

│ ┆ H.R. 9468 ┆ directive ┆ Requires the Secretary of Veterans Affairs … ┆ — ┆ SEC. 103 ┆ │

│ ┆ H.R. 9468 ┆ directive ┆ Requires the Secretary of Veterans Affairs … ┆ — ┆ SEC. 103 ┆ │

│ ┆ H.R. 9468 ┆ directive ┆ Requires the Inspector General of the Depar… ┆ — ┆ SEC. 104 ┆ │

└───┴───────────┴───────────────┴───────────────────────────────────────────────┴───────────────┴──────────┴─────┘

7 provisions found

| Column | What It Shows |

|---|---|

| $ | Verification status of the dollar amount (see symbols table below) |

| Bill | Which bill this provision comes from |

| Type | The provision type: appropriation, rescission, rider, directive, limitation, transfer_authority, cr_substitution, mandatory_spending_extension, directed_spending, continuing_resolution_baseline, or other |

| Description / Account | The account name for appropriations and rescissions, or a description for other provision types. Long text is truncated with … |

| Amount ($) | The dollar amount. Shows — for provisions without a dollar value (riders, directives). |

| Section | The section reference from the bill text (e.g., “SEC. 101”). Empty if the provision appears under a heading without a section number. |

| Div | The division letter for omnibus bills (e.g., “A” for MilCon-VA in H.R. 4366). Empty for bills without divisions. |

The $ column — verification symbols

The leftmost column tells you the verification status of each provision’s dollar amount:

| Symbol | Meaning | Should You Worry? |

|---|---|---|

| ✓ | The exact dollar string (e.g., $2,285,513,000) was found at one unique position in the source bill text. | No — this is the best result. |

| ≈ | The dollar string was found at multiple positions in the source text. The amount is correct, but it can’t be pinned to a single location. | No — very common for round numbers like $5,000,000 which may appear 50 times in an omnibus. |

| ✗ | The dollar string was not found in the source text. | Yes — this provision needs manual review. Across the included dataset, this occurs only once in 18,584 dollar amounts (99.995%). |

| (blank) | The provision doesn’t carry a dollar amount (riders, directives, some policy provisions). | No — nothing to verify. |

CR substitution table

When you search for cr_substitution type provisions, the table automatically changes shape to show the old and new amounts:

congress-approp search --dir data/118-hr5860 --type cr_substitution

┌───┬───────────┬──────────────────────────────────────────┬───────────────┬───────────────┬──────────────┬──────────┬─────┐

│ $ ┆ Bill ┆ Account ┆ New ($) ┆ Old ($) ┆ Delta ($) ┆ Section ┆ Div │

╞═══╪═══════════╪══════════════════════════════════════════╪═══════════════╪═══════════════╪══════════════╪══════════╪═════╡

│ ✓ ┆ H.R. 5860 ┆ Rural Housing Service—Rural Community… ┆ 25,300,000 ┆ 75,300,000 ┆ -50,000,000 ┆ SEC. 101 ┆ A │

│ ... │

│ ✓ ┆ H.R. 5860 ┆ Office of Personnel Management—Salari… ┆ 219,076,000 ┆ 190,784,000 ┆ +28,292,000 ┆ SEC. 126 ┆ A │

└───┴───────────┴──────────────────────────────────────────┴───────────────┴───────────────┴──────────────┴──────────┴─────┘

13 provisions found

Instead of a single Amount column, you get:

| Column | Meaning |

|---|---|

| New ($) | The new dollar amount the CR substitutes in |

| Old ($) | The old dollar amount being replaced |

| Delta ($) | New minus Old. Negative means a cut, positive means an increase |

Semantic search table

When you use --semantic or --similar, a Sim (similarity) column appears at the left:

┌──────┬───────────┬───────────────┬───────────────────────────────────────┬────────────────┬─────┐

│ Sim ┆ Bill ┆ Type ┆ Description / Account ┆ Amount ($) ┆ Div │

╞══════╪═══════════╪═══════════════╪═══════════════════════════════════════╪════════════════╪═════╡

│ 0.51 ┆ H.R. 4366 ┆ appropriation ┆ Child Nutrition Programs ┆ 33,266,226,000 ┆ B │

│ 0.46 ┆ H.R. 4366 ┆ appropriation ┆ Child Nutrition Programs ┆ 10,000,000 ┆ B │

└──────┴───────────┴───────────────┴───────────────────────────────────────┴────────────────┴─────┘

The Sim score is the cosine similarity between your query and the provision’s embedding vector, ranging from 0 to 1:

| Score Range | Interpretation |

|---|---|

| > 0.80 | Almost certainly the same program (when comparing across bills) |

| 0.60 – 0.80 | Related topic, same policy area |

| 0.45 – 0.60 | Loosely related |

| < 0.45 | Probably not meaningfully related |

Results are sorted by similarity descending and limited to --top N (default 20).

The Audit Table

The audit command provides the most detailed quality view:

congress-approp audit --dir data

┌───────────┬────────────┬──────────┬──────────┬───────┬───────┬──────────┬───────────┬──────────┬──────────┐

│ Bill ┆ Provisions ┆ Verified ┆ NotFound ┆ Ambig ┆ Exact ┆ NormText ┆ Spaceless ┆ TextMiss ┆ Coverage │

╞═══════════╪════════════╪══════════╪══════════╪═══════╪═══════╪══════════╪═══════════╪══════════╪══════════╡

│ H.R. 4366 ┆ 2364 ┆ 762 ┆ 0 ┆ 723 ┆ 2285 ┆ 59 ┆ 0 ┆ 20 ┆ 94.2% │

│ H.R. 5860 ┆ 130 ┆ 33 ┆ 0 ┆ 2 ┆ 102 ┆ 12 ┆ 0 ┆ 16 ┆ 61.1% │

│ H.R. 9468 ┆ 7 ┆ 2 ┆ 0 ┆ 0 ┆ 5 ┆ 0 ┆ 0 ┆ 2 ┆ 100.0% │

│ TOTAL ┆ 2501 ┆ 797 ┆ 0 ┆ 725 ┆ 2392 ┆ 71 ┆ 0 ┆ 38 ┆ │

└───────────┴────────────┴──────────┴──────────┴───────┴───────┴──────────┴───────────┴──────────┴──────────┘

The audit table has two groups of columns: amount verification (left side) and text verification (right side).

Amount verification columns

These check whether the dollar amount string (e.g., "$2,285,513,000") exists in the source bill text:

| Column | What It Counts | Ideal Value |

|---|---|---|

| Verified | Provisions whose dollar string was found at exactly one position in the source | Higher is better |

| NotFound | Provisions whose dollar string was not found anywhere in the source text | Must be 0 — any value above 0 means you should investigate |

| Ambig | Provisions whose dollar string was found at multiple positions (ambiguous location but correct amount) | Not a problem — common for round numbers |

The sum of Verified + Ambig equals the total number of provisions that have dollar amounts. NotFound should always be zero. Across the included example data, it is.

Text verification columns

These check whether the raw_text excerpt (the first ~150 characters of the bill language for each provision) is a substring of the source text:

| Column | Match Method | What It Means |

|---|---|---|

| Exact | Byte-identical substring match | The raw text was copied verbatim from the source — best case. 95.5% of provisions across the 13-bill dataset. |

| NormText | Matches after normalizing whitespace, curly quotes (" → "), and em-dashes (— → -) | Minor formatting differences from XML-to-text conversion. Content is correct. |

| Spaceless | Matches only after removing all spaces | Catches word-joining artifacts. Zero occurrences in the example data. |

| TextMiss | Not found at any matching tier | The raw text may be paraphrased or truncated. In the example data, all 38 TextMiss cases are non-dollar provisions (statutory amendments) where the LLM slightly reformatted section references. |

Coverage column

Coverage is the percentage of all dollar-sign patterns found in the source bill text that were matched to an extracted provision. This measures completeness, not accuracy.

- 100% (H.R. 9468): Every dollar amount in the source was captured — perfect.

- 94.2% (H.R. 4366): Most dollar amounts were captured. The remaining 5.8% are typically statutory cross-references, loan guarantee ceilings, or old amounts being struck by amendments — dollar figures that appear in the text but aren’t independent provisions.

- 61.1% (H.R. 5860): Lower coverage is expected for continuing resolutions because most of the bill text consists of references to prior-year appropriations acts, which contain many dollar amounts that are contextual references, not new provisions.

Coverage below 100% does not mean the extracted numbers are wrong. It means the bill text contains dollar strings that aren’t captured as provisions. See What Coverage Means (and Doesn’t) for a detailed explanation.

Quick decision guide

After running audit, here’s how to interpret the results:

| Situation | Interpretation | Action |

|---|---|---|

| NotFound = 0, Coverage ≥ 90% | Excellent — all extracted amounts verified, high completeness | Use with confidence |

| NotFound = 0, Coverage 60–90% | Good — all extracted amounts verified, some dollar strings in source uncaptured | Fine for most purposes; check unaccounted amounts if completeness matters |

| NotFound = 0, Coverage < 60% | Amounts are correct but extraction may be incomplete | Consider re-extracting; review with audit --verbose |

| NotFound > 0 | Some amounts need review | Run audit --verbose to see which provisions failed; verify manually against the source XML |

The Compare Table

The compare command shows account-level differences between two sets of bills:

congress-approp compare --base data/118-hr4366 --current data/118-hr9468

┌─────────────────────────────────────┬──────────────────────┬─────────────────┬───────────────┬──────────────────┬─────────┬──────────────┐

│ Account ┆ Agency ┆ Base ($) ┆ Current ($) ┆ Delta ($) ┆ Δ % ┆ Status │

╞═════════════════════════════════════╪══════════════════════╪═════════════════╪═══════════════╪══════════════════╪═════════╪══════════════╡

│ Compensation and Pensions ┆ Department of Veter… ┆ 197,382,903,000 ┆ 2,285,513,000 ┆ -195,097,390,000 ┆ -98.8% ┆ changed │

│ Readjustment Benefits ┆ Department of Veter… ┆ 13,774,657,000 ┆ 596,969,000 ┆ -13,177,688,000 ┆ -95.7% ┆ changed │

│ ... │

│ Supplemental Nutrition Assistance … ┆ Department of Agric… ┆ 122,382,521,000 ┆ 0 ┆ -122,382,521,000 ┆ -100.0% ┆ only in base │

└─────────────────────────────────────┴──────────────────────┴─────────────────┴───────────────┴──────────────────┴─────────┴──────────────┘

| Column | Meaning |

|---|---|

| Account | The account name, matched between bills |

| Agency | The parent agency or department |

| Base ($) | Total budget authority for this account in the --base bills |

| Current ($) | Total budget authority in the --current bills |

| Delta ($) | Current minus Base |

| Δ % | Percentage change |

| Status | changed (in both, different amounts), unchanged (in both, same amount), only in base (not in current), or only in current (not in base) |

Results are sorted by the absolute value of Delta, largest changes first.

Interpreting cross-type comparisons: When comparing an omnibus to a supplemental (as above), most accounts will show “only in base” because the supplemental only touches a few accounts. The tool warns you about this: “Comparing Omnibus to Supplemental. Accounts in one but not the other may be expected.” The compare command is most informative when comparing bills of the same type — for example, an FY2023 omnibus to an FY2024 omnibus.

Output Formats

Every query command supports four output formats via --format:

Table (default)

congress-approp search --dir data/118-hr9468 --format table

Human-readable formatted table. Best for interactive use and quick exploration. Column widths adapt to content. Long text is truncated.

JSON

congress-approp search --dir data/118-hr9468 --format json

A JSON array of objects. Includes every field for each matching provision — more data than the table shows. Best for programmatic consumption, piping to jq, or loading into scripts.

JSONL (JSON Lines)

congress-approp search --dir data/118-hr9468 --format jsonl

One JSON object per line, no enclosing array. Best for streaming processing, piping to while read, or working with very large result sets. Each line is independently parseable.

CSV

congress-approp search --dir data/118-hr9468 --format csv > provisions.csv

Comma-separated values suitable for importing into Excel, Google Sheets, R, or pandas. Includes a header row. Dollar amounts are plain integers (not formatted with commas).

Tip: When exporting to CSV for Excel, make sure to import the file with UTF-8 encoding. Some bill text contains em-dashes (—) and other Unicode characters that may display incorrectly with the default Windows encoding.

For a detailed guide with examples and recipes for each format, see Output Formats.

Provision Types at a Glance

You’ll encounter these provision types throughout the tool. Use --list-types for a quick reference:

congress-approp search --dir data --list-types

Available provision types:

appropriation Budget authority grant

rescission Cancellation of prior budget authority

cr_substitution CR anomaly (substituting $X for $Y)

transfer_authority Permission to move funds between accounts

limitation Cap or prohibition on spending

directed_spending Earmark / community project funding

mandatory_spending_extension Amendment to authorizing statute

directive Reporting requirement or instruction

rider Policy provision (no direct spending)

continuing_resolution_baseline Core CR funding mechanism

other Unclassified provisions

The distribution varies by bill type. In the FY2024 omnibus (H.R. 4366), the breakdown is:

| Type | Count | What These Are |

|---|---|---|

appropriation | 1,216 | Grant of budget authority — the core spending provisions |

limitation | 456 | Caps and prohibitions (“not more than”, “none of the funds”) |

rider | 285 | Policy provisions that don’t directly spend or limit money |

directive | 120 | Reporting requirements and instructions to agencies |

other | 84 | Provisions that don’t fit neatly into the standard types |

rescission | 78 | Cancellations of previously appropriated funds |

transfer_authority | 77 | Permission to move funds between accounts |

mandatory_spending_extension | 40 | Amendments to authorizing statutes |

directed_spending | 8 | Earmarks and community project funding |

The continuing resolution (H.R. 5860) has a very different profile: 49 riders, 44 mandatory spending extensions, 13 CR substitutions, and only 5 standalone appropriations. This reflects the CR’s structure — it mostly continues prior-year funding rather than setting new levels.

For detailed documentation of each provision type including all fields and real examples, see Provision Types.

Enriched Output

When you run congress-approp enrich --dir data (no API key needed), the tool generates bill metadata that enhances the output:

- Enriched classifications — the summary table shows “Full-Year CR with Appropriations” instead of “Continuing Resolution” for hybrid bills like H.R. 1968, and “Minibus” instead of “Omnibus” for bills covering only 2–4 subcommittees.

- Advance appropriation split — use

--show-advanceonsummaryto separate current-year spending from advance appropriations (money enacted now but available in a future fiscal year). This is critical for VA accounts where 79.5% of MilCon-VA budget authority is advance. - Fiscal year and subcommittee filtering — use

--fy 2026and--subcommittee thudto scope any command to a specific year and jurisdiction, automatically resolving division letters across bills.

See Enrich Bills with Metadata for the full guide.

Next Steps

Related chapters:

- Enrich Bills with Metadata — enable FY filtering, subcommittee scoping, and advance splits

- Find How Much Congress Spent on a Topic — your first real research task

- Compare Two Bills — see what changed between bills

- Track a Program Across Bills — trace one account across fiscal years

- Filter and Search Provisions — all the search flags in one place

Recipes & Demos

Worked examples using the included 32-bill dataset (data/). All commands run locally against the pre-extracted data with no API keys unless noted. Semantic search requires OPENAI_API_KEY.

The book/cookbook/cookbook.py script reproduces all CSVs, charts, and JSON shown on this page. See Run All Demos Yourself at the bottom.

Dataset Overview

| 116th Congress (2019–2021) | 11 bills — FY2019, FY2020, FY2021 |

| 117th Congress (2021–2023) | 7 bills — FY2021, FY2022, FY2023 |

| 118th Congress (2023–2025) | 10 bills — FY2024, FY2025 |

| 119th Congress (2025–2027) | 4 bills — FY2025, FY2026 |

| Total | 32 bills, 34,568 provisions, $21.5 trillion in budget authority |

| Accounts tracked | 1,051 unique Federal Account Symbols across 937 cross-bill links |

| Source traceability | 100% — every provision has exact byte positions in the enrolled bill |

| Dollar verification | 99.995% — 18,583 of 18,584 dollar amounts confirmed in source text |

Subcommittee coverage by fiscal year

The --subcommittee filter requires bills with separate divisions per jurisdiction. FY2025 was funded through H.R. 1968, a full-year continuing resolution that wraps all 12 subcommittees into a single division — so --subcommittee cannot break it apart. Use trace or search --fy 2025 to access FY2025 data by account.

| Fiscal Year | Subcommittee filter | Notes |

|---|---|---|

| FY2019 | Partial | Only supplemental and disaster relief bills |

| FY2020–FY2024 | ✅ Full | Traditional omnibus/minibus bills with per-subcommittee divisions |

| FY2025 | ❌ Not available | Funded via full-year CR (H.R. 1968) — all jurisdictions in one division |

| FY2026 | ✅ Full | Three bills cover all 12 subcommittees |

Quick Reference

# Track any federal account across all fiscal years (by FAS code or name search)

congress-approp trace "child nutrition" --dir data

# Budget totals for FY2026

congress-approp summary --dir data --fy 2026

# Find FEMA provisions across all bills covering FY2026

congress-approp search --dir data --keyword "Federal Emergency Management" --fy 2026

# Compare THUD funding FY2024 → FY2026 with inflation adjustment

congress-approp compare --base-fy 2024 --current-fy 2026 --subcommittee thud --dir data --use-authorities --real

# Verification quality across all 32 bills

congress-approp audit --dir data

Searching and Tracking Accounts

Keyword search

The --keyword flag searches the raw_text field — the verbatim bill language stored with each provision. It is case-insensitive. Combine with --type to filter by provision type, --fy by fiscal year, --agency by department, or --min-dollars / --max-dollars for dollar ranges. All filters are ANDed.

congress-approp search --dir data --keyword "veterans" --type appropriation

┌───┬───────────┬───────────────┬───────────────────────────────────────────────┬─────────────────┬─────────┬─────┐

│ $ ┆ Bill ┆ Type ┆ Description / Account ┆ Amount ($) ┆ Section ┆ Div │

╞═══╪═══════════╪═══════════════╪═══════════════════════════════════════════════╪═════════════════╪═════════╪═════╡

│ ✓ ┆ H.R. 133 ┆ appropriation ┆ Compensation and Pensions ┆ 6,110,251,552 ┆ ┆ J │

│ ✓ ┆ H.R. 133 ┆ appropriation ┆ Readjustment Benefits ┆ 14,946,618,000 ┆ ┆ J │

│ ✓ ┆ H.R. 133 ┆ appropriation ┆ General Operating Expenses, Veterans Benefit… ┆ 3,180,000,000 ┆ ┆ J │

│ ... │

Column reference:

| Column | Meaning |

|---|---|

| $ | Dollar amount verification status. ✓ = dollar string found at one unique position in the enrolled bill text. ≈ = found at multiple positions (common for round numbers) — correct but location ambiguous. ✗ = not found in source — needs review. Blank = provision has no dollar amount. |

| Bill | The enacted legislation this provision comes from |

| Type | Provision classification: appropriation (grant of budget authority), rescission (cancellation of prior funds), transfer_authority (permission to move funds), rider (policy provision, no spending), directive (reporting requirement), limitation (spending cap), cr_substitution (CR anomaly replacing one dollar amount with another), and others |

| Description / Account | Account name (for appropriations, rescissions) or description text (for riders, directives). This is the name as written in the bill text, between '' delimiters. |

| Amount ($) | Budget authority in dollars. — = provision carries no dollar value. |

| Section | Section reference in the bill (e.g., SEC. 1701). Empty if no numbered section. |

| Div | Division letter for omnibus/minibus bills. Division letters are bill-internal — Division A means different things in different bills. |

Tracking an account across fiscal years

The trace command follows a single federal account across every bill in the dataset using its Federal Account Symbol (FAS code) — a government-assigned identifier that persists through name changes and reorganizations.

Finding the FAS code by name:

congress-approp trace "child nutrition" --dir data

If the name matches multiple accounts, the tool lists them with their FAS codes. Use the code for the specific account:

congress-approp trace 012-3539 --dir data

TAS 012-3539: Child Nutrition Programs, Food and Nutrition Service, Agriculture

Agency: Department of Agriculture

┌──────┬──────────────────────┬───────────┬──────────────────────────┐

│ FY ┆ Budget Authority ($) ┆ Bill(s) ┆ Account Name(s) │

╞══════╪══════════════════════╪═══════════╪══════════════════════════╡

│ 2020 ┆ 23,615,098,000 ┆ H.R. 1865 ┆ Child Nutrition Programs │

│ 2021 ┆ 25,118,440,000 ┆ H.R. 133 ┆ Child Nutrition Programs │

│ 2022 ┆ 26,883,922,000 ┆ H.R. 2471 ┆ Child Nutrition Programs │

│ 2023 ┆ 28,545,432,000 ┆ H.R. 2617 ┆ Child Nutrition Programs │

│ 2024 ┆ 33,266,226,000 ┆ H.R. 4366 ┆ Child Nutrition Programs │

│ 2026 ┆ 37,841,674,000 ┆ H.R. 5371 ┆ Child Nutrition Programs │

└──────┴──────────────────────┴───────────┴──────────────────────────┘

6 fiscal years, 6 bills, 175,270,792,000 total

| Column | Meaning |

|---|---|

| FY | Federal fiscal year (Oct 1 – Sep 30). FY2024 = Oct 2023 – Sep 2024. |

| Budget Authority ($) | What Congress authorized the agency to obligate. This is budget authority, not outlays. |